Jailbreaking my 2012 Kindle Paperwhite

I usually prefer to read paper books and get them from my local library when I can, but I have an old Kindle Paperwhite that I generally load up with books to read while travelling. It saves a lot of hassle, bulk and weight compared to carrying around several paperbacks.

I had previously looked into custom Kindle firmware and found people saying that such things don’t exist, but with Amazon pulling support for old Kindles I had another look and realised that I’d missed something: there may not be fully custom firmware for Kindles, but there are jailbreaks and custom software. It took me a while to get it working, navigating various Mobile Read forum threads to piece together steps that worked and now that I have

Getting device information

The first thing you need is the serial number of your Kindle to determine the exact model type and compare it to the Mobile Read wiki page on Kindle serial numbers. You can get this on Amazon’s website while logged into the account that the Kindle is registered to or from the device itself.

Confusingly, on my Kindle you must access this by pressing the ☰Menu button, selecting Settings, then pressing the ☰Menu button again (which pops up a different menu when pressed from inside the settings screen) and then selecting Device Info. The wiki page does mention this too, but I missed it at first. The device info popup also shows the firmware version, which will be important later; for me it was 5.6.1.1.

In my case the serial number starts with B024. Amazon’s site describes it as “Kindle Paperwhite (5th Generation)” and the Mobile Read wiki calls it “Kindle PaperWhite WiFi”, but more importantly the nickname that is used in Mobile Read’s guides is

Grabbing the software

The jailbreak method I used for my

These can all be grabbed here:

- Kindle firmware version 5.4.4.2

- Kindle firmware version 5.6.1.1

- K5 Jailbreak - Latest version at time of writing is kindle-jailbreak-1.16.N-r19426.tar.xz

- KUAL - Same link as the one above. Latest version at time of writing is KUAL-v2.7.37-gfcb45b5-20250419.tar.xz

- Jailbreak fix - Update_hotfix_universal.bin

- KO Reader - For the PW1, the file labelled koreader-kindle-v####.##.zip

- Stardict English dictionary - dict-en-en.zip (not the one labelled Kindle)
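Before touching the Kindle it may be worth confirming that everything is actually downloaded. The sketch below is only an illustration: the function name missing_downloads and the idea of keeping all files in one folder are my assumptions, and the file names are the ones used in this post (the KO Reader version is matched with a wildcard rather than guessed).

```python
import fnmatch
from pathlib import Path

# File names as they appear in this post. The KO Reader release number is not
# given in the post, so it is matched with a wildcard instead of being guessed.
REQUIRED_PATTERNS = [
    "update_kindle_5.4.4.bin",
    "update_kindle_5.6.1.1.bin",
    "kindle-jailbreak-1.16.N-r19426.tar.xz",
    "KUAL-v2.7.37-gfcb45b5-20250419.tar.xz",
    "Update_hotfix_universal.bin",
    "koreader-kindle-v*.zip",
    "dict-en-en.zip",
]


def missing_downloads(download_dir):
    """Return the patterns from REQUIRED_PATTERNS with no matching file in download_dir."""
    names = [p.name for p in Path(download_dir).iterdir() if p.is_file()]
    return [pat for pat in REQUIRED_PATTERNS if not fnmatch.filter(names, pat)]
```

Calling missing_downloads on your download folder returns an empty list once every file is present; anything it returns still needs fetching. If newer jailbreak or KUAL releases are out, the version strings in the list would need updating.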

Factory reset (do not actually do this)

To ensure that these steps worked from a blank slate I repeated them after doing a factory reset on my Kindle. As Amazon are ending support for older Kindles if you do a factory reset after the

If Amazon haven’t turned off the servers yet and you are logging into an old Kindle with two-factor authentication enabled, logging in with just your username and password will fail. Append your six-digit authentication token to the end of your password and it should work.
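To make the two-factor trick concrete, here is a tiny illustration of what gets typed into the password field. The function name is made up for this example, and the values in the comment are placeholders, not real credentials.

```python
def kindle_login_password(password: str, token: str) -> str:
    """Return the string to type into the Kindle's password field.

    The six-digit token from the authenticator is appended directly to the
    end of the normal account password, with nothing in between.
    """
    if not (token.isascii() and token.isdigit() and len(token) == 6):
        raise ValueError("expected a six-digit authentication token")
    return password + token


# Placeholder values: password "hunter2" and token "123456" give "hunter2123456".
```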

Installation

- Getting ready

  - If Amazon haven’t already turned off the servers then now is the time to download any books that you want to keep from your Amazon account onto the Kindle.

  - Once that’s done enable aeroplane mode by navigating to ☰Menu→Settings→Aeroplane Mode.

- Downgrade the firmware to 5.4.4

  - Connect the Kindle to your computer with a Micro-USB cable. It should mount the same as an external drive or memory stick. If it doesn’t and only starts charging, try different Micro-USB cables until you find one that does data transfer.

  - Copy update_kindle_5.4.4.bin to the root directory of the Kindle.

  - Without ejecting the Kindle or unplugging the USB cable, hold down the power button of the Kindle until the charging light goes out and it unmounts from the PC. This took about twelve seconds for me.

  - When you release the power button the Kindle should restart and begin installing the new firmware after a few seconds.

- Install the jailbreak

  - Once the firmware downgrade is finished and the Kindle has restarted, check that the downgrade was successful by going to ☰Menu→Settings→☰Menu→Device Info and ensuring the firmware version is now 5.4.4.2.

  - Reconnect the Kindle to your PC.

  - Extract the contents of kindle-jailbreak-1.16.N-r19426.tar.xz and from that extract the contents of kindle-5.4-jailbreak.zip into the root directory of the Kindle.

  - Eject the Kindle from your PC and unplug the USB cable.

  - Install the jailbreak from your Kindle by navigating ☰Menu→Settings→☰Menu→Update Your Kindle. If the option is greyed out make sure that aeroplane mode is on, reconnect your Kindle to your PC and double check that all the contents of kindle-5.4-jailbreak.zip (including Update_jb_$(cd mnt && cd us && sh jb.sh).bin) are still in the root directory of your Kindle (copy them over again if not) and try again.

  - If the jailbreak was successful then some text saying JAILBREAK should appear at the bottom of the screen.

- Install KO Reader and KUAL

  - Reconnect the Kindle to your PC.

  - Extract the contents of koreader-kindle-v####.##.zip to the root directory of the Kindle.

  - Extract the contents of dict-en-en.zip to /koreader/data/dict/

  - From KUAL-v2.7.37-gfcb45b5-20250419.tar.xz extract KUAL-KDK-2.0.azw2 and copy it to /documents/

- Upgrade back to 5.6.1.1

  - Copy update_kindle_5.6.1.1.bin to the root directory of the Kindle.

  - Eject the Kindle from your PC and unplug the USB cable.

  - Navigate to ☰Menu→Settings→☰Menu→Update Your Kindle and wait for the upgrade to install.

- Install the jailbreak hotfix

  - Reconnect the Kindle to your PC.

  - Copy Update_hotfix_universal.bin to the root directory of the Kindle.

  - Eject the Kindle from your PC and unplug the USB cable.

  - Navigate to ☰Menu→Settings→☰Menu→Update Your Kindle one last time.

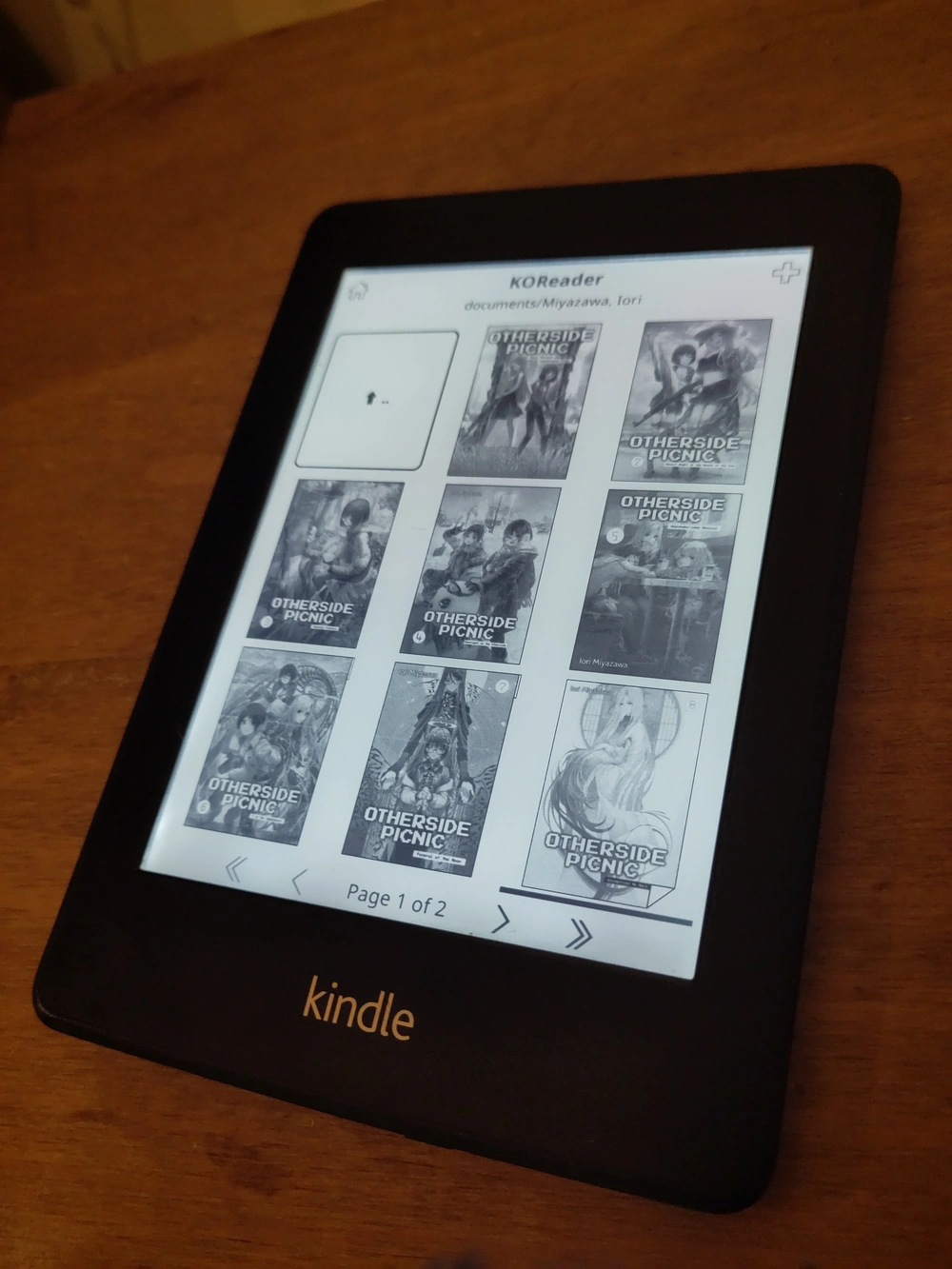

All going well there should now be a Kindle Launcher/

I use Calibre to organise my e-books and it can handle transferring them over to the Kindle too, though by default it only sends books in formats the Kindle can natively read. You can change what formats it will transfer over as well as the folder structure it uses from the settings for the Kindle plugin under Preferences→Plugins.